Dull, dirty, and dangerous. For decades, the “Three D’s” were viewed as the ideal types of jobs for robots. A fourth D—domestic—was not so much in the discussion.

And for good reason, says Steve Cousins, PhD ’97, executive director of the Stanford Robotics Center. Early robots—like hydraulic arms—were dangers themselves. “For the first 40 years of robotics actually doing work in the world, robots were kept behind safety cages,” Cousins says. “They were designed to be strong and precise, not to be around people. Safety wasn’t on the list.”

We live in a different era now, of course, one in which driverless cars and delivery robots roll down city streets with the artificial good sense to do their job while—mostly—giving wide berth to humans. And Cousins thinks we’re on the cusp of another transformation, one in which robots won’t just have the awareness to avoid people but also the sophistication to work in increasingly close physical quarters with us in our personal lives. The age of the domestic robot nears.

Steve Cousins (Photo: Stanford School of Engineering)

Cousins is not imagining a luxury fleet of e-butlers but rather essential tools for navigating a looming shift in society. The United States is an aging country. By 2050, the number of Americans 65 and older is projected to reach 82 million, up more than 40 percent from 2022. By 2030, the number of Americans over 65 is expected to surpass the number under 18 for the first time in U.S. history. That generational imbalance is likely to test caregiving resources as new retirees outnumber new workers, and to put additional pressure on the supply of suitable housing. Robots that can help people move, toilet, bathe, dress, socialize, or do chores offer a potential path to “aging in place” with greater independence. “When we talk about domestic robotics, we really talk about robots to support aging and disabilities,” Cousins says.

One upside of the demographic crunch is that it’s focusing thought and investment in ways that will help the senior citizens of the future, says Abby King, a professor of epidemiology and population health who has spent decades studying health and aging. “Everything that’s developed for the baby boomers is going to be a wonderful asset for every other generation,” she says. In robots, she hopes for tools that preserve independence and social engagement to extend health as long as life. “Agency is so important as everyone gets older so that we feel we’re in charge of our lives and we don’t become infantilized.”

Cousins is inspired by a similar vision. A decade before returning to Stanford, he was CEO of a company that made a robot intended mostly as a research tool, but that a man named Henry Evans, MBA ’91, embraced. Evans, who sustained a stroke that left him paralyzed and unable to speak, used the robot to reclaim acts from shaving to playing games to scratching itches. Age will present many of us with physical challenges too, Cousins says. Some 46 percent of Americans aged 75 and older report having a disability, nearly four times the rate of those 35 to 64. Robots can help us adjust. “You look at Henry as this kind of extreme case,” Cousins says. “But the baby boomers are turning 80, and many of them will acquire disabilities as they age. Caregiving is the critical societal problem robotics has to solve.”

Here are four Stanford labs thinking about how.

Ruff Terrain

When Michelle Baldonado, PhD ’98, takes Rosie—aka Robot for Outdoor Socially Interactive Exercise—out on campus, the experience is like going for a walk with her dog, Jasko, a fluffy white Coton de Tulear. They’re both attention magnets that follow her wherever she goes. The robot, an augmented version of a device made by Boston-based Piaggio Fast Forward that resembles a minicooler on wheels, probably turns more heads. “If I’m out walking, I’m on everybody’s iPhone footage,” she says.

MULTI-TALENTED: Baldonado, right, thinks about how companion robots like Rosie can provide benefits ranging from safety to companionship. (Photo: Stanford School of Engineering (2))

But Baldonado, a research engineer at the Robotics Center, wants a robot with more than canine cute cred. She is leading a flagship project there called SOAR—Stanford Older Adult Robotics—that aims to give robots capabilities similar to those of guide dogs that serve people with low vision. By adding lidar and 360-degree cameras—the hardware at the heart of autonomous vehicles—as well as features like a raisable handle and a chatbot, Baldonado and her collaborators are seeking to enable companion robots to lead the way, warn about cracks, curbs, and uneven pavement, offer physical support, provide companionship, and even alert users if their gait looks unsteady. Rosie is the base for early navigation experiments, but the research team plans to build future versions on a four-wheeled quadruped, which can roll when practical and walk when needed.

Postdoctoral scholar Jing Liang, a researcher on the Rosie team working in collaboration with Stanford’s Center on Longevity, had once imagined putting his expertise in robot navigation into delivery robots, but he thinks this project will have more societal impact. It’s also an invigorating challenge. Compared with, say, an autonomous car, a robotic guide dog must be ready for more varied terrains, from stairs to grass to ornamental ponds. “We have a really specific group of people we can help,” he says. “That’s why I came here.”

The researchers scoped the project by talking to residents at senior living facilities such as Channing House in Palo Alto, where they got a vivid sense of how the fear of falling shrinks people’s lives, Baldonado says. Falls are the leading cause of injury for adults 65 and older, and simply the worry of them can be debilitating. “Even if they haven’t fallen, that fear alone can stop them from going out,” she says. “Once people stop going out and stop walking, you see a cascade of other health issues.” (Walking’s many benefits include decreased risks of cardiovascular and cerebrovascular disease.)

Rhonda Bekkedahl, CEO of Channing House, says she thinks at least a third of the facility’s 250 residents would benefit from a robotic walking assistant. “If you want longevity, you have to maintain your mobility,” she says.

Baldonado looks at the issue from a research perspective, not a business one, but she says commercialization may not take long. “It’s realistic to imagine a next-generation outdoor walking companion robot becoming available in the next few years.”

Pep Walk

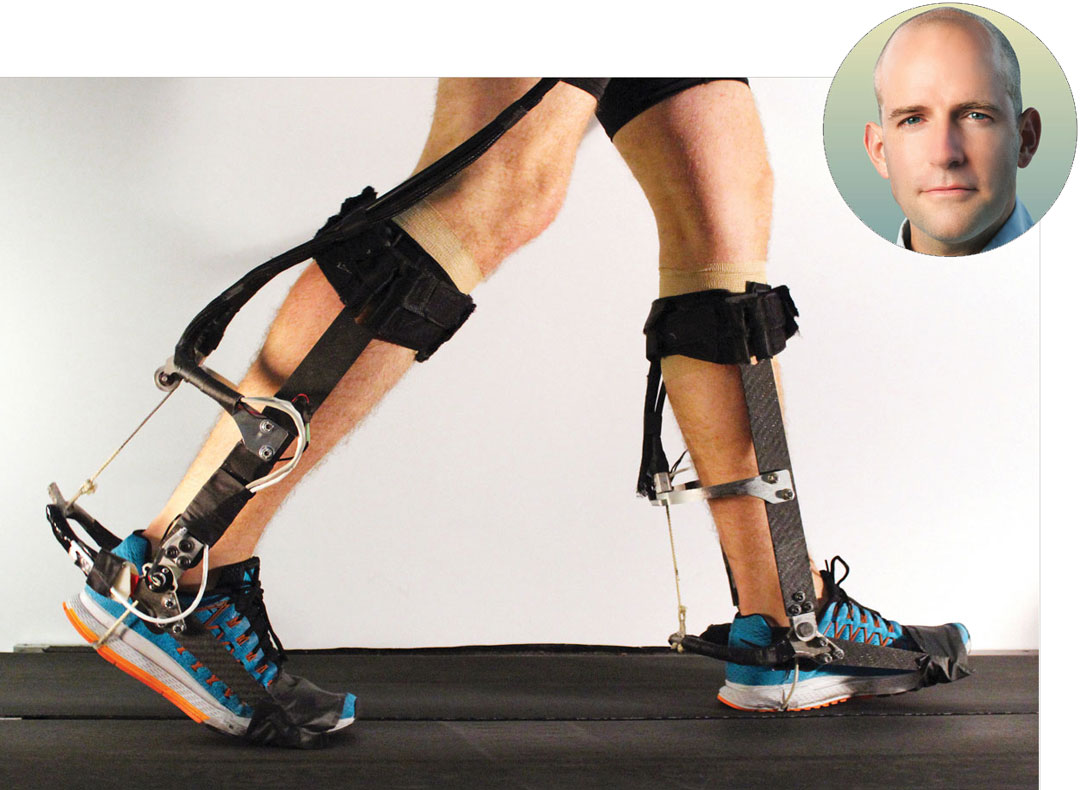

As we age, challenges to walking can come even before we go outside. “A main reason for relocation into an assisted living facility is an inability to navigate the stairs in your house,” says Steve Collins, associate professor of mechanical engineering, who develops wearable robotics and smart prosthetics to improve mobility. One of his lab’s most promising prototypes, an “ankle exoskeleton” that straps on the lower leg, literally gives users extra pep in their step, making it easier to do things that can trouble older walkers and others with mobility issues, like climbing stairs.

But syncing a machine with a human is much harder than the Iron Man movies would suggest. As a grad student at the University of Michigan, Collins spent five years developing and testing a prosthetic foot designed to make walking easier for amputees. Mechanically speaking, the device worked perfectly, and yet the outcome was the opposite of what was desired. “Our fancy new prosthesis actually increased the effort of walking,” says Collins.

Indeed, the vast majority of robotic prosthetics and exoskeletons—wearable robots that help a person move—fail to deliver a boost, he says. It’s fiendishly difficult to marry a machine with the movements of a highly complicated, incredibly individualized body. “Humans are very, very complex,” he says. “Everyone is different, and they change over time. Even if we had devices that weighed nothing, had infinite power, and could produce as much torque and power as we wanted mechanically, we, in most cases, would struggle to help people.”

Once he established his own lab, Collins overhauled his research methods to better address this challenge. He moved away from building prototypes according to a set design only to discover flaws in the completed device, and toward a more iterative approach. Hardware and algorithms are constantly put through their paces under changing conditions by testers on a treadmill emulator and tweaked and retweaked accordingly. “We get more bad ideas quickly, until we stumble upon some good ideas,” he says.

The ankle exoskeleton is a fruit of that approach. The experimental device, which resembles a pair of leg braces around the feet and calves, uses a machine-learning model to adjust to a walker’s gait before providing a customized push as the user’s toes leave the ground. In outdoor tests on young adults, the exoskeleton allowed users to walk 9 percent faster with 17 percent less energy expended. Researchers likened the effect to taking off a 20-pound backpack. It was, they said, the first time an exoskeleton provided energy savings for real-world users. Treadmill tests on people in their 70s and 80s showed similar gains, Collins says.
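The article doesn't spell out the lab's algorithm, but the general idea of personalizing assistance (try settings, keep the ones that seem to lower effort) can be illustrated with a toy sketch. Everything in it is an assumption for illustration only: the two settings (push timing as a fraction of the stride, push strength as a fraction of maximum torque), the `estimated_effort` function, which stands in for a learned model with a simple made-up formula, and the hill-climbing search itself. It is not the Collins lab's method.

```python
import random

def estimated_effort(timing, strength, user_optimum=(0.55, 0.7)):
    """Hypothetical stand-in for a learned model that estimates a walker's
    effort from sensor data (lower is better). Here it is simply a bowl
    centered on an assumed, made-up personal optimum."""
    best_timing, best_strength = user_optimum
    return (timing - best_timing) ** 2 + (strength - best_strength) ** 2

def tune_assistance(effort_fn, trials=200, step=0.05, seed=0):
    """Toy stochastic hill climb: nudge the current settings at random and
    keep a change only if the estimated effort goes down."""
    rng = random.Random(seed)
    best = (0.5, 0.5)  # (push timing, push strength), both scaled to 0..1
    best_cost = effort_fn(*best)
    for _ in range(trials):
        # Perturb each setting slightly, clamped to the valid 0..1 range.
        candidate = tuple(
            min(1.0, max(0.0, value + rng.uniform(-step, step))) for value in best
        )
        cost = effort_fn(*candidate)
        if cost < best_cost:  # keep only improvements
            best, best_cost = candidate, cost
    return best, best_cost

settings, cost = tune_assistance(estimated_effort)
print(f"timing={settings[0]:.2f}, strength={settings[1]:.2f}, effort={cost:.4f}")
```

With a real device, each evaluation would mean actual walking time, so a practical system would presumably need far fewer trials than this toy loop uses.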

STEADY NOW: The ankle exoskeleton, developed by the lab of Collins, right, aims to help users improve their mobility. (Photos, from left: Stanford Biomechatronics Lab; Stanford School of Engineering)

Such assistance could give new range to older walkers worried about crossing intersections before the “walk” signal turns, fatiguing while away from home, or recovering from stroke. It could also help the 30 percent of Americans over 75 who have serious difficulty walking or climbing stairs.

The lab is working to develop other aspects of the exoskeleton, such as ways it can improve a user’s balance and reduce pressure on joints. Patrick Slade, MS ’17, PhD ’21, who was first author on a 2022 Nature paper about the device and who is now an assistant professor of bioengineering at Harvard, is optimistic about how quickly a product with similar technology could reach market. “Honestly, I think in the next five years you’ll be able to go to Target or Dick’s and buy a pair of these,” he says. “It’ll just be like a motorized pair of shoes.”

Dress for success

With a century’s worth of science fiction to draw on, many people imagine a robot to be a lot like a metallic human—say, C-3PO in Star Wars. And in fact, humanoids have lately made stunning leaps. Google “backflipping robot” for an example.

Allison Okamura, MS ’96, PhD ’00, tilts in another direction, especially in the realm of using robots to care for older people. “I’m a little anti-humanoid, actually,” she says. A mechanical engineering professor, Okamura subscribes to an idea espoused by roboticist Rodney Brooks, PhD ’81, that a robot’s appearance makes implicit promises about its capabilities. Today’s humanoids might flip like gymnasts, but they still struggle with tasks like emptying a dishwasher. “The way that kind of robot looks, people assume that it has human capabilities because it looks human—and they’re still very far from that,” she says.

Allison Okamura (Photo: Stanford School of Engineering)

By contrast, Okamura has an interest in smaller, task-specific robots designed to assist humans but not mimic them. Some scientists, for example, work on robot arms capable of dressing people. Okamura’s lab is rethinking the clothes themselves: What if the garments were the robots? One of her lab’s projects involves designing clothing made of self-assembling panels with automated zippers—a potential benefit for, say, someone with arthritic shoulders who has difficulty donning a shirt.

SMOOTH MOVES: Okamura’s lab has designed what she calls vine robots that can autonomously don and doff clothing. (Photo: Nam Gyun Kim (5))

Okamura is a champion of “soft robotics” made with flexible, stretchable, and lightweight materials that can conform to the body and support it with a tenderness that’s difficult for traditional robotic components. “If the skin is soft, you don’t want to be pushing on it with something hard,” she says.

Indeed, some of her robots are like balloons. Okamura is a pioneer in vine robots: inflatable plastic tubes that can grow from about 1 foot to more than 200 feet. Imagine a balled-up sock that rapidly unfolds to 25,000 percent of its original length (and that can then be neatly retracted). They are toylike in appearance, but their ability to snake through small spaces, around obstacles, and under weights with minimal friction lets them slip around heavy but fragile objects such as human bodies. In recent research with collaborators at MIT, Okamura demonstrated that vines can effectively curl under people to lift them out of bed.

Her lab has also developed a “magic sheet” that grows under a supine person, making it easier for, say, a single caregiver to move a partner or client. She wants to extend that model into more diverse, three-dimensional forms. When someone falls, she says, getting the person back up can be the hardest part. An inflatable robotic chair, with a vine’s ability to gently insinuate itself under the body, could help a spouse get their partner on their feet without a 911 call, she says.

“Part of my mission is to get people to rethink what a robot is,” she says. “You need to design the right robot for the right job.”

Again, with feeling

When the Stanford Robotics Center opened in the refurbished basement of the Packard Electrical Engineering Building in the fall of 2024, one of the media stars was TidyBot, a prototype designed to put away clutter. The mobile, one-armed robot, co-developed at Stanford, Columbia, and Princeton, used AI to generalize from simple commands like “Yellow shirts go in the drawer,” inferring where similar items, such as “light-colored shirts,” should go. In tests, it correctly put away items from socks to snacks 85 percent of the time, with much less data collection and training than other robots required.

In the real world, we’re still waiting for a chore-bot that can fold the laundry, wash the dishes, or help someone get dressed as effectively as the robot in the 2012 movie Robot & Frank, about a health-care bot sent to the home of an older man, says Monroe Kennedy III, an assistant professor of mechanical engineering who directs the Assistive Robotics and Manipulation Lab. Navigating the randomness of homes is part of the challenge. But so is the inimitability of the human hand, Kennedy says. Its 27 bones, 27 joints, and more than 30 muscles bestow a dexterity that remains hard to match. And it has a sensitivity robots can’t equal. “Robots, to date, don’t really have the sense of touch that rivals the human sense of touch,” he says. It’s hard to trust a robot to bring you a glass of water if it can’t sense the glass slipping due to condensation.

Monroe Kennedy III (Photo: Stanford School of Engineering)

Kennedy is working to change that touch deficit. His lab has developed robotic fingertips that use visual observation as a proxy for touch. Essentially, they feel by seeing. When the device picks something up with its soft, silicone tips, a camera inside each digit observes how much the fingertip’s surface distorts and, from that, detects the object’s texture and the forces acting on it, from twisting to sliding. The robot fingers are so sensitive that they can hold multiple strands of thread and distinguish how much each is being tugged, he says. They can pick up objects as small as basil seeds, which can challenge human hands. And they can handle fruit as fragile as blackberries without bruising it. “With this fingertip we’re showing, well, we can actually do things now that were extremely difficult.”
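Real vision-based touch sensors infer forces from how the soft skin deforms. As a heavily simplified illustration, assume the internal camera tracks a handful of dots on the silicone surface. The hypothetical functions below treat the dots' average sideways shift as a stand-in for shear force and flag a sudden jump between frames as possible slip, like the condensation-slick glass Kennedy describes. The marker setup, the pixel units, and the threshold are all assumptions, not details of the lab's device.

```python
import math
from typing import List, Optional, Tuple

Point = Tuple[float, float]  # (x, y) position of one tracked marker, in pixels

def shear_from_markers(reference: List[Point], current: List[Point]) -> Point:
    """Mean (dx, dy) shift of tracked markers relative to the no-contact
    reference frame; a hypothetical proxy for sideways (shear) force."""
    if not reference or len(reference) != len(current):
        raise ValueError("need matching, non-empty marker lists")
    n = len(reference)
    dx = sum(c[0] - r[0] for r, c in zip(reference, current)) / n
    dy = sum(c[1] - r[1] for r, c in zip(reference, current)) / n
    return dx, dy

def detect_slip(frames: List[List[Point]], reference: List[Point],
                threshold: float = 0.5) -> Optional[int]:
    """Return the index of the first frame whose shear estimate jumps by more
    than `threshold` pixels from the previous frame, or None if none does."""
    previous = None
    for i, frame in enumerate(frames):
        dx, dy = shear_from_markers(reference, frame)
        if previous is not None and math.hypot(dx - previous[0], dy - previous[1]) > threshold:
            return i
        previous = (dx, dy)
    return None
```

A steady grip produces a roughly constant shift from frame to frame; a sudden change in that shift is the kind of cue a controller could use to tighten its hold.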

TOUCHY: Kennedy, center, and his colleagues have developed robotic fingertips that feel by seeing. (Photo: Stanford School of Engineering)

There are many applications for such a technology, from agriculture to manufacturing. Kennedy thinks it could be a tool for older people in their homes. Its fine dexterity could help perform tasks like opening medicine bottles or picking up a dropped pill, and its gentleness could one day enable direct physical assistance in bathing or dressing. Progress requires more investment and more research, Kennedy says, but he’s optimistic we’re not far from a day when robots step in to make aging in place much easier. “We’re a lot closer than you think.”

Sam Scott is a senior writer at Stanford. Email him at sscott3@stanford.edu.