You probably know the Stanford origin story of Google, and, if you don’t, because of Google, you can Google the Stanford origin story of Google. It begins in 1995, when two graduate students—Larry Page, MS ’98, and Sergey Brin, MS ’95—created a search engine ranking the importance of individual web pages (if their original name had stuck, you wouldn’t Google things, you would Backrub them).

But Stanford’s tradition of innovation starts about a century earlier. By 1893, two years after the university opened its doors, a member of the Pioneer Class of 1895 named Clyde Patterson had reportedly secured a deal with music giant Lyon & Healy to manufacture a new musical instrument he called the mandolin-guitar and for which he planned to seek a patent.

Long before the start-up era took hold, Stanford faculty and students were dreaming up inventions that transformed (and in some cases established) domains as far-ranging as genetic engineering, nanotechnology, organ transplantation—even the internet itself.

Here are snapshots of nine of them.

The Klystron

Who: Physics research associates Russell, Class of 1925, MA ’27, and Sigurd Varian

When: 1937

Led to: Precise weather forecasts, military defense systems, deep space communications

Cars traveling at different speeds and bunching into groups on a highway. That’s the vision that came to Russell Varian as “an idea in the middle of the night.” But the cars were only a metaphor for something much more powerful: the microwave tube, or a way to generate electromagnetic radiation.

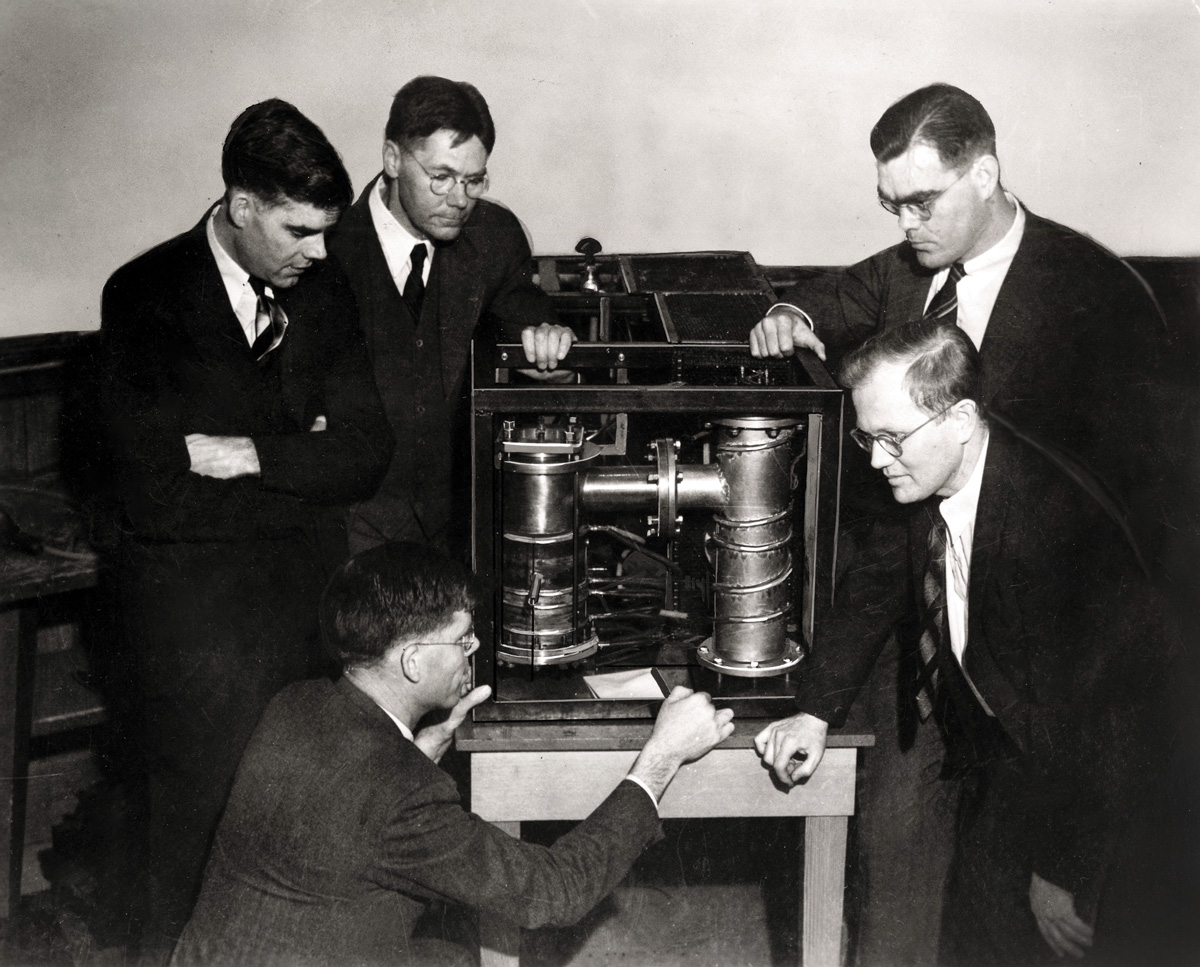

In 1937, Varian, Class of 1925, MA ’27, and his brother Sigurd, with $100 in funding from Stanford, built on the work of physics professor W.W. Hansen to construct a model of the technology that became known as the klystron: an organ pipe-like tube that produces electrons and bunches them into groups that emit microwave radiation waves, thereby powering instruments large and small, highly technical and everyday. National Weather Service radar relies on klystron tubes, as do air traffic control, television broadcasting, satellite communications, and radiation therapy equipment used to treat millions of cancer patients. (It’s not the gizmo that powers your microwave oven, though; that’s a magnetron.) “What’s been around for half a century, looks like a piece of plumbing, can weigh a ton or be held in the palm of your hand, and makes life a lot easier?” quipped Rich Cartiere of the Associated Press on the occasion of the device’s 50th anniversary.

TOTALLY TUBULAR: Clockwise from lower left: Russell Varian, Sigurd Varian, physics professor David Webster, Hansen, and graduate student John Woodyard, Engr. ’37, PhD ’41, with an early klystron. (Photo: Courtesy Special Collections & University Archives/Stanford Libraries)

The Varians adopted the name klystron—from the Greek klyzo, for the motion of waves breaking on the shore—on the recommendation of a Stanford classics professor. After patenting the device in 1939, Stanford sold the rights to a manufacturer. The United States and Britain later collaborated to use the technology for radar in Royal Air Force planes against Germany in World War II.

Klystron efforts have continued on and around the Farm ever since. Varian Associates, the company the Varian brothers founded in 1948 with Hansen, Russell Varian’s wife, and others, would soon become the first tenant of the Stanford Industrial Park. Hansen and two other Stanford faculty members produced the first multi-megawatt microwave technology—a klystron with a power output of 30 MW, exponentially higher than existing models—which paved the way for the manufacture of high-powered klystrons put to use in missile-detection systems during the Cold War. Today, klystron-powered research continues at the SLAC National Accelerator Laboratory, a partnership with the U.S. Department of Energy. The klystron gallery at the 2-mile-long building holds more than 150 of the devices, all accelerating electrons to nearly the speed of light.

The Human Heart Transplant

Who: Professor of cardiothoracic surgery Norman Shumway

When: 1968

Led to: Hundreds of thousands of lives prolonged worldwide

When Stanford professor Norman Shumway performed the first successful human heart transplant in the United States on Stanford’s campus in 1968, the chief resident assisting him at the time, Edward Stinson, asked if what they were doing was even legal.

“I guess we’ll see,” Shumway replied.

Eight years later, California passed the Natural Death Act, which provided a legal basis for what the surgeons had done: removed a vital organ from a patient with brain death so that another might live.

Building on preliminary experiments in animals by early-20th-century scientists, Shumway had developed the protocol for heart transplants, which he and then-Stanford colleague Richard Lower successfully deployed in dogs beginning in 1959. After Shumway announced he was ready to perform the procedure in a human, he got scooped by South Africa’s Christiaan Barnard, a former colleague from their trainee days at the University of Minnesota. A month later, at Stanford Hospital, Shumway and Stinson transplanted a donor heart into retired steelworker Mike Kasperak. It took 25 minutes for the donated heart to start beating again.

MEET THE PRESS: Shumway (top and left) and cardiology professor Donald Harrison answer questions from reporters so eager they tried to scale hospital walls to peek in Kasperak’s window. (Photo: Chuck Painter/Stanford University/Courtesy of Special Collections & University Archives/Stanford Libraries (2))

“We put in the heart and nothing happened,” Shumway said later. “There were slow waves on the EKG and then the heart began beating stronger and then, exuberance.” Kasperak would live for two weeks.

A key part of Shumway’s initial technique was to preserve the donor heart in ice-cold saltwater to reduce its metabolism, and he continued to innovate in ways that improved the heart transplant process. To determine sooner whether the body would reject the new organ, he and his colleagues performed heart biopsies with tiny catheters. He also introduced the use of cyclosporine, an immunosuppressant that helps prevent rejection. In 1981, Shumway performed the first successful human heart-lung transplant alongside the man who would succeed him as chair of cardiothoracic surgery: Bruce Reitz, ’66. In 2022, the current chair, Joseph Woo, performed the first transplant of a beating heart from a donor after cardiac death.

“Dr. Shumway is the father of heart transplantation,” Woo said in 2025. Today, there are more than 4,500 heart transplants each year in the United States, and most recipients survive for more than a decade.

Modern Basketball

Who: Junior Hank Luisetti

When: 1936

Led to: March Madness, Steph Curry, 74 alumni who have played in the NBA/ABA and WNBA (37 men, 37 women)

It’s almost impossible to imagine, but when basketball was first invented in 1891 (as “Basket Ball”) by James Naismith, a Canadian who later founded the University of Kansas program, the proper way to shoot was standing, flat-footed, with two hands in front of your chest. If your team fouled three times consecutively, the other team got a point. You couldn’t dribble, but you could “bat” the ball with one or two hands (no fists).

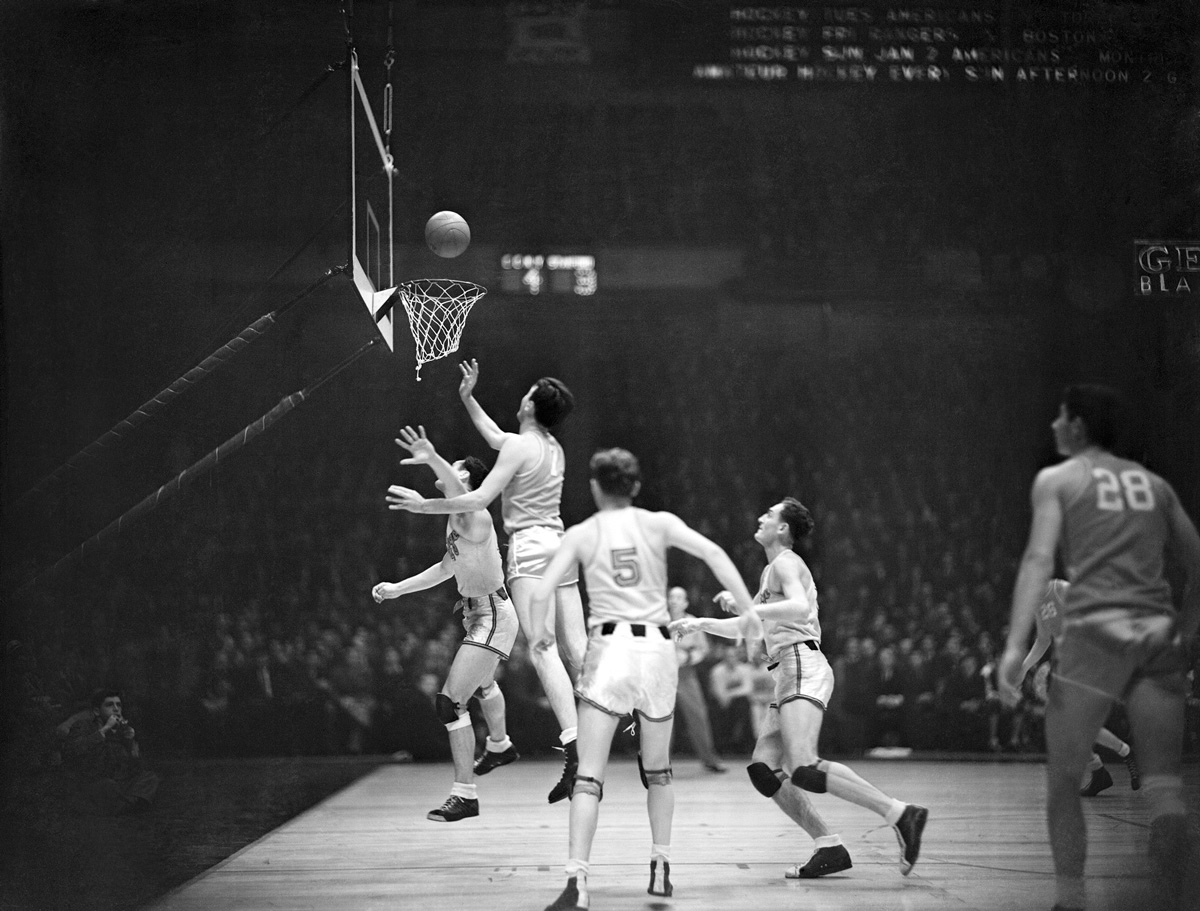

Things evolved rather quickly in ways that made the sport more exciting to play and watch. The jump shot—a 1930s innovation attributed to several players—was a literal game changer. So was Stanford’s three-time All-American Angelo-Giuseppi “Hank” Luisetti, ’38, who invented the “running one-hander” shot. “Shooting two-handed, I just couldn’t reach the basket,” Luisetti once told the San Francisco Chronicle. “I’d get the ball, take a dribble or two and jump and shoot on the way up.” The shot went the mid-century equivalent of viral—and the game of basketball changed forever—after a 1936 game at Madison Square Garden against Long Island University during Luisetti’s junior year.

The game pitted the undefeated Blackbirds—who played the traditional way with lots of passing and planned offensive plays—against the Stanford “Laughing Boys,” so called because they had so much fun playing together. Powered by Luisetti, they had the last laugh, defeating LIU 45-31 with their signature approach: playing much quicker on offense and defense, with fast breaks and full-court presses.

WEST COAST OFFENSE: Luisetti (No. 7) repeatedly put on a show at Madison Square Garden, here teaming up with the Laughing Boys to defeat City College of New York, 45-42. (Photo: Bettmann/Getty Images)

Media and opposing coaches were skeptical of Stanford’s style before the game; afterward, they “climbed on the bandwagon,” wrote former Stanford sports information director Don Liebendorfer, Class of 1924, in his book, The Color of Life is Red. A Sports Illustrated writer said the game was “pivotal in the sport’s history, introducing the nation to modern basketball.”

Two days before the fateful game at the Garden, the New York Times described Luisetti as “the greatest shot basketball has produced.” He also became the first college player to score 50 points in a game and perhaps the first to dribble behind his back. But his legacy—transforming a static, defensive game into a fluid, offensive one—transcends any individual accomplishment.

“Stanford played unlike any other team of its era because it had a player named Hank Luisetti,” wrote Ben Cohen of the Wall Street Journal in 2017. “Naismith was basketball’s inventor, but Luisetti was its innovator.”

The Computer Mouse

Who: Stanford Research Institute engineer Douglas Engelbart

When: 1968

Led to: Your ability to scroll through this article online

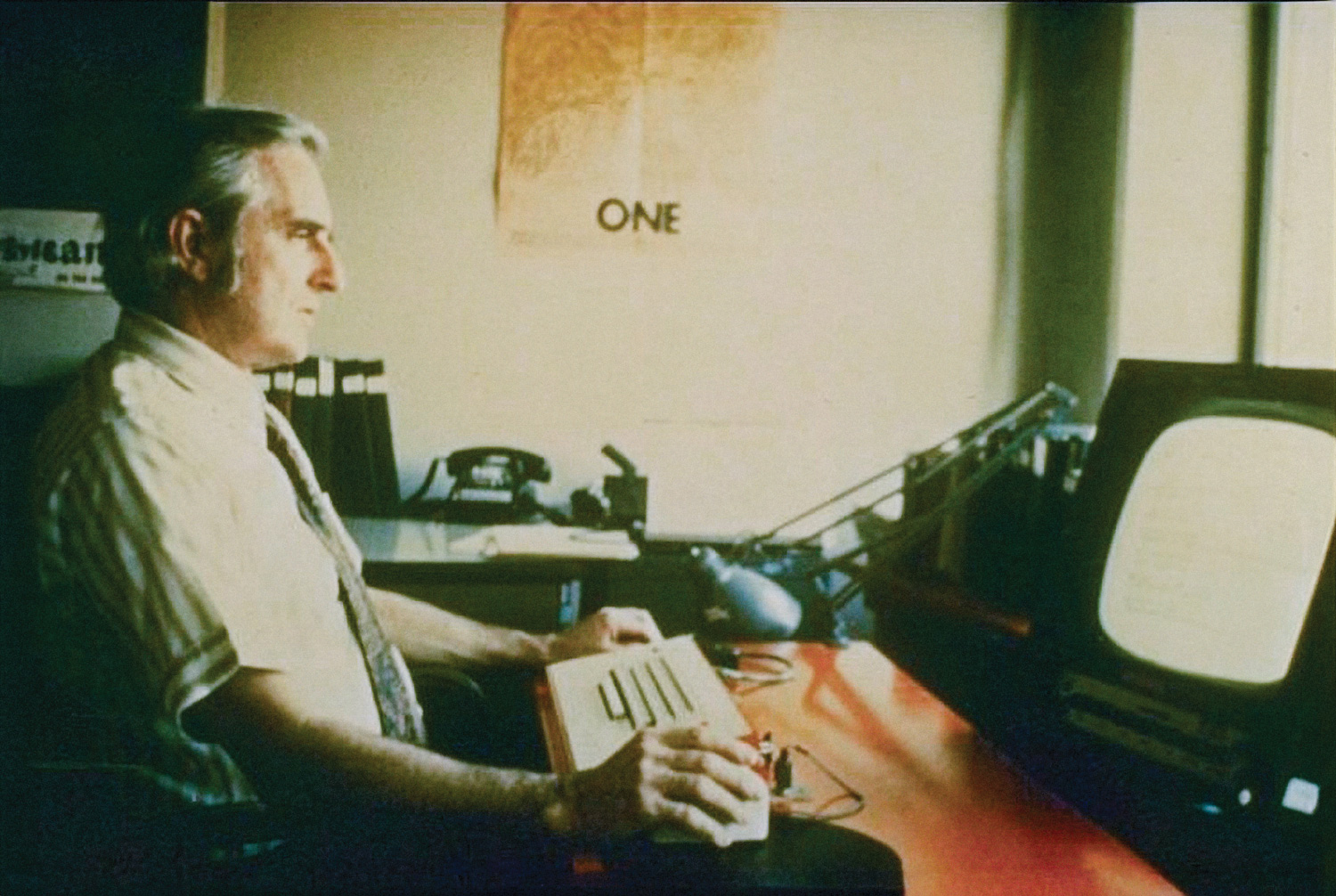

In 1968, in what has been called “the mother of all demos,” Douglas Engelbart, an engineer working at the Stanford Research Institute (part of Stanford until 1970), demonstrated how to use his invention, the “X-Y Position Indicator for a Display System.” Before a crowd of about 1,000 computing professionals, he used the handheld device, which had two wheels and three buttons, to move his cursor about the screen. It was the world’s first glimpse of the computer mouse, which, by enabling pointing, clicking, and dragging, would change the way humans interacted with computers.

POINT AND CLICK: The mouse was integral to Engelbart’s vision of human-computer interaction. (Photo: SRI)

“If in your office, you as an intellectual worker were supplied with a computer display backed up by a computer that was alive for you all day and was instantly responsive to every action you have, how much value could you derive from that?” Engelbart said in the 90-minute demo, a sort of TED Talk of its time. He then proceeded to use the mouse to copy, move, and paste the word “word” over and over again before demonstrating other tasks (including manipulating a shopping list his wife had given him).

In fact, Engelbart was demonstrating far more than the mouse. For years, he had imagined and played a role in conceiving ways to collaborate using computers to increase “collective I.Q.” The mouse (“Sometimes I apologize” for the name, he said in his demo) was only a small part of his grand plan to create a human-computer interface. The mother of all demos also featured hypertext linking, windows, and videoconferencing. Everything Engelbart demonstrated was later put into commercial—indeed everyday—use. The mouse made its mass-market debut in the mid-1980s at the behest of Apple’s Steve Jobs, who hired a Stanford-trained product design team to create a one-button version that could be manufactured for less than $35, paving a path that ultimately led to modern touch screens like the one in your pocket.

The Fluorescence-Activated Cell Sorter

Who: Assistant professor of genetics Leonard Herzenberg

When: 1969

Led to: T-cell counts to monitor HIV, stem cell isolation for research, personalized cancer treatments

When most people’s eyes get tired after a long day of working, they close them. Not Leonard Herzenberg. When the then-assistant professor of genetics grew weary counting immunofluorescent cells under a microscope, he didn’t just call it quits for the night and start over again in the morning; he decided to find a more efficient way to do the necessary work.

He pestered scientists at Los Alamos National Laboratory into sharing the blueprints for a machine they used to sort mice and rat cells that had been exposed to atomic bomb testing. Then he worked with engineers in the Stanford genetics department to create what they initially called “The Whizzer,” which could separate cells based on certain characteristics by directing fluorescent-dyed antibodies to attach to specific molecules.

“Separation of large numbers of functionally different cell types from the complex mixtures found in such organs as spleen, bone marrow, lymph nodes, liver, or kidney would be useful in biological and biochemical investigations,” they wrote in the resulting 1969 paper.

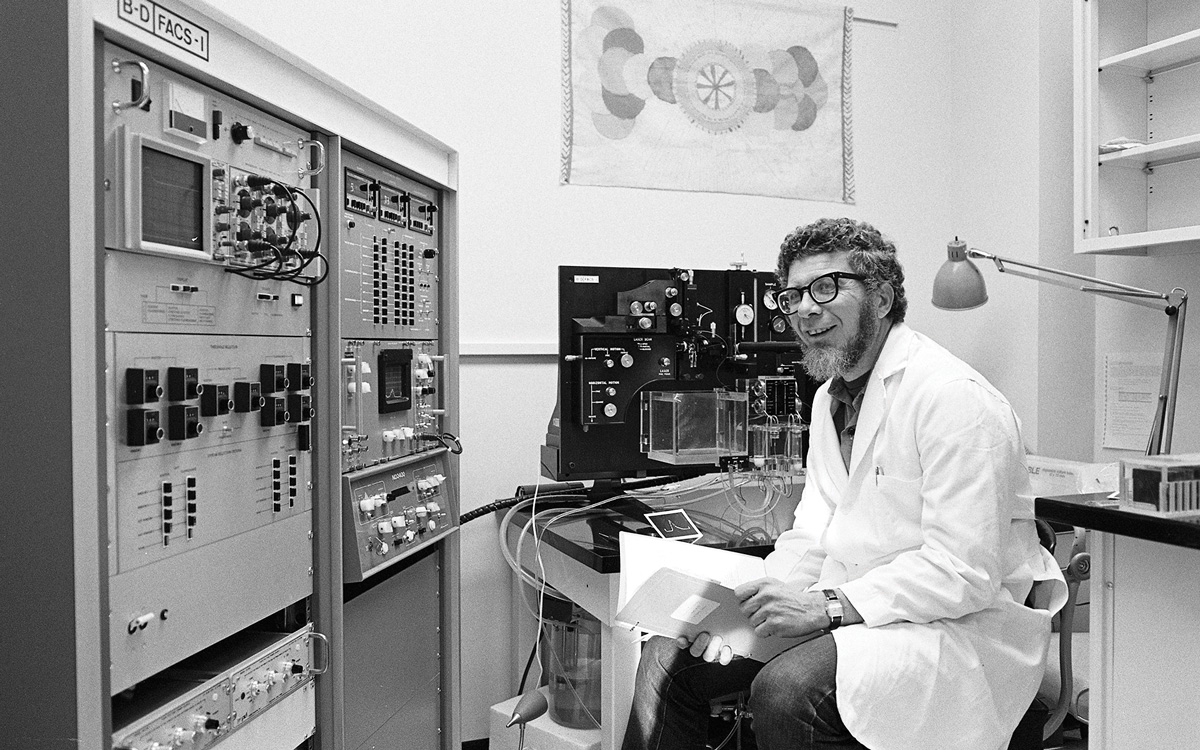

WHIZZER KID: Herzenberg developed the FACS because his eyes got tired while counting cells. (Photo: Courtesy Special Collections & University Archives/Stanford Libraries)

That’s putting it mildly. Their machine eventually became the first fluorescence-activated cell sorter (FACS), commonly known as a type of flow cytometer. Herzenberg’s partner in life and work, Leonore Herzenberg, was instrumental in enabling its practical applications, which today include modern cancer immunotherapies and personalized medicine, as well as stem cell research. It is used to monitor patients with autoimmune diseases, organ transplants, and HIV.

“Without the Herzenbergs, tens of thousands of people now alive would not be,” professor of pathology and of developmental biology Irving Weissman, MD ’65, a stem cell researcher and former student, told Stanford Medicine after Len Herzenberg, by then a professor emeritus, died in 2013.

The Herzenbergs—known as Len and Lee—operated a joint laboratory and continued to make major contributions to science for decades. They later created a series of monoclonal antibodies—lab-grown disease-fighting proteins that mimic the real thing—and demonstrated how to use them with FACS, then made them available to other researchers to enable them to create new diagnostics and therapeutics. Lee Herzenberg, a research professor of genetics, still runs the lab where they worked together.

Before the FACS, cell counting was “just chaos,” Lee Herzenberg said in a 2013 interview. “It was not science as we wanted to do it.” In an interview for the 2006 Kyoto Prize—for which he insisted Lee receive recognition as well—Len Herzenberg said he “could not imagine that it would be so widely used, although I did think that it would be used in immunology and in cell biology.”

He estimated that maybe 100 instruments would be sold; today, there are more than 40,000 FACS machines in medical labs around the world.

Digital FM Synthesis

Who: Assistant professor of music John Chowning, MA ’64, DMA ’66

When: 1967

Led to: ’80s pop and its progeny

At first, John Chowning, then an assistant professor of music, thought the sound he was hearing in the Stanford artificial intelligence laboratory in the “wee hours” of a 1967 night was distortion. Chowning, MA ’64, DMA ’66, was studying reverberation, building on an earlier version of surround sound, when, while experimenting with vibrato, he heard “something different.”

Think, Chowning says, of a violinist moving their fingers on the strings of their instrument, creating a vibrato—or oscillating—effect to change the pitch of the tone. If a violinist could move their fingers (or arms) much faster, the effect would be greater. Chowning could manipulate a tone to make sounds reminiscent of bells, specific instruments, fog horns, and even, eventually, the human voice.

An engineering friend confirmed that the sound—made by frequency modulation—was not a mistake. “I had created, with two oscillators, complex tones that, by any other means, would have taken many, many simultaneous oscillators,” Chowning recalls. “I had no idea of its final applications, but I knew it was a big win.”

PLAY ON: Chowning’s work led to the democratization of computer music. (Photo: Chuck Painter/Stanford University/Courtesy of Special Collections & University Archives/Stanford Libraries)

It was a huge win—for Stanford and the music industry. Before Chowning’s discovery, which became known as digital frequency modulation (FM) synthesis, musicians used analog oscillators to produce novel sounds. “In the digital domain, the oscillators are basically program code,” he says. Stanford patented the technology and then—after several organ companies demurred—licensed it to Yamaha, which, with Chowning as a consultant, released the DX7 synthesizer for $1,995 in 1983.
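To make the "oscillators are basically program code" idea concrete, here is a minimal sketch of two-oscillator FM in Python. It is an illustration under stated assumptions, not Chowning's or Yamaha's implementation: the function name `fm_tone`, its parameters, the 44,100 Hz sample rate, and the example frequencies are all hypothetical choices, and it uses the common textbook form in which one sine wave (the modulator) shifts the phase of another (the carrier).

```python
import math

def fm_tone(carrier_hz, modulator_hz, index, seconds=0.01, sample_rate=44100):
    """Return samples of a sine carrier whose phase is pushed around by a second sine.

    All names and defaults here are illustrative assumptions.
    """
    n = round(seconds * sample_rate)
    samples = []
    for i in range(n):
        t = i / sample_rate
        # Oscillator 1: the modulator, a plain sine wave.
        modulator = math.sin(2 * math.pi * modulator_hz * t)
        # Oscillator 2: the carrier, with the modulator added to its phase.
        samples.append(math.sin(2 * math.pi * carrier_hz * t + index * modulator))
    return samples

plain = fm_tone(440.0, 110.0, index=0.0)  # index 0 leaves an unmodulated sine
rich = fm_tone(440.0, 110.0, index=5.0)   # a larger index adds more overtones
```

With the modulation index at zero the output is a pure tone; raising it spreads energy into additional frequencies, which is how two oscillators can stand in for many.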

“It just took over the market,” says Chowning, who co-founded Stanford’s Center for Computer Research in Music and Acoustics and whose earlier forays into computer-assisted music could take 20 to 30 minutes to produce two seconds of sound. The DX7 had “all sorts of attributes that were nowhere near possible in any synthesizers of the day. I was very proud to be associated with it because it democratized computer music. Before then, computer music lived in huge labs with multimillion-dollar computers.”

Digital FM synthesis became the sound of 1980s pop music. Chowning associates it most with Toto’s “Africa,” but other examples include Madonna’s “Like a Prayer,” Kenny Loggins’s “Danger Zone,” and A-Ha’s “Take On Me.”

In January, Chowning, who is 91 and continues to tour with his wife, the singer Maureen Chowning, received a Technical Grammy Award for his work as a “transformative composer and computer-music innovator.” He was honored at a merit awards ceremony alongside several music icons, including Carlos Santana, Cher, Chaka Khan, and Paul Simon.

Recombinant DNA

Who: Professor of biochemistry Paul Berg; associate professor of medicine Stanley Cohen and UCSF’s Herbert Boyer

When: 1971–74

Led to: Medications for diabetes, hemophilia, and multiple sclerosis; today’s hepatitis B vaccine; GMOs

All the ingredients for the 1971 experiment that would lead to Stanford professor Paul Berg’s Nobel Prize win were in the refrigerator of the biochemistry department.

Berg was already a well-known researcher when his lab created a recombinant DNA (rDNA) molecule by combining DNA from two different organisms—E. coli and an animal virus called SV40—using a bacterial virus called lambda phage, which infects E. coli, as a Trojan horse. He didn’t consider the work his most important, and, in fact, others at Stanford, including graduate student Peter Lobban, MS ’76, PhD ’82, were working on similar experiments at the same time.

That was the culture of the department, Berg explained in a 1997 oral history. That the ingredients were available in an open-access fridge was “a reflection of the kind of atmosphere we had in our department,” he said. “It wasn’t competitive. It wasn’t secretive.”

Berg then hit the pause button on the next rDNA study planned in his lab, an experiment by graduate student Janet Mertz, PhD ’75, to clone and propagate SV40 mutants in E. coli. On the one hand, he and others understood the potential benefits of rDNA experimentation, such as novel medications and hardier crops. On the other hand, they worried that it could inadvertently create pathogens and toxins. In 1975, Berg organized the Asilomar Conference, which led to a voluntary, self-imposed moratorium on rDNA experiments “that were considered potentially hazardous” to public health, as well as strict guidelines for continued research.

PRESSING PAUSE: Berg was the first to create a recombinant DNA molecule. Then he called a halt. (Photo: Jose Mercado/Stanford University/Courtesy of Special Collections & University Archives)

Work with rDNA had continued to progress at Stanford, including in the lab of associate professor of medicine Stanley Cohen. He and UCSF biochemist Herbert Boyer (who later co-founded Genentech) became the first to clone DNA and to transplant genes from one living organism to another. Their 1973 and 1974 papers laid the foundation for genetic engineering.

“My goal wasn’t to clone DNA,” says Cohen, now a professor of medicine and of genetics. “I was interested in antibiotic resistance.” But in order to study genes, he says, “you need enough copies to study.”

After reading an article about the research in the New York Times, Niels Reimers, who founded the Stanford University Office of Technology Licensing in 1969, convinced Cohen and Boyer to patent it.

“I didn’t think of it as an invention,” Cohen says. “It didn’t occur to me to begin with that you could patent something that was basic science. That shows you how naïve I was at the time.”

The development—and the patents—proved to be transformative: More than 400 resulting licensing agreements enabled major breakthroughs in science and medicine and brought hundreds of millions of dollars to the two universities (Reimers was inducted into the IP Hall of Fame, in part based on the Cohen-Boyer licensing program). Among the more than 2,400 products developed with the technology were drugs to treat heart and lung disease, HIV/AIDS, cancer, and diabetes.

Today, more than 8 million people in the United States rely on the first one: human insulin.

The Atomic Force Microscope

Who: Professor of applied physics and of electrical engineering Calvin Quate, MS ’47, PhD ’50, visiting professor Gerd Binnig, and visiting scientist Christoph Gerber

When: 1986

Led to: Targeted drug delivery, modern water purification systems, smartphones

There aren’t always specifically identifiable moments that mark when an idea becomes a reality, however we may yearn for cries of “Eureka!” But Professor Calvin Quate, MS ’47, PhD ’50, had at least one: In the mid-1980s, as his colleague Bob Byer, MS ’67, PhD ’69, remembered it in 2019, Quate rushed into his office to share the news that a powerful tool being developed in his lab—the atomic force microscope—was no longer just a drawing on a blackboard.

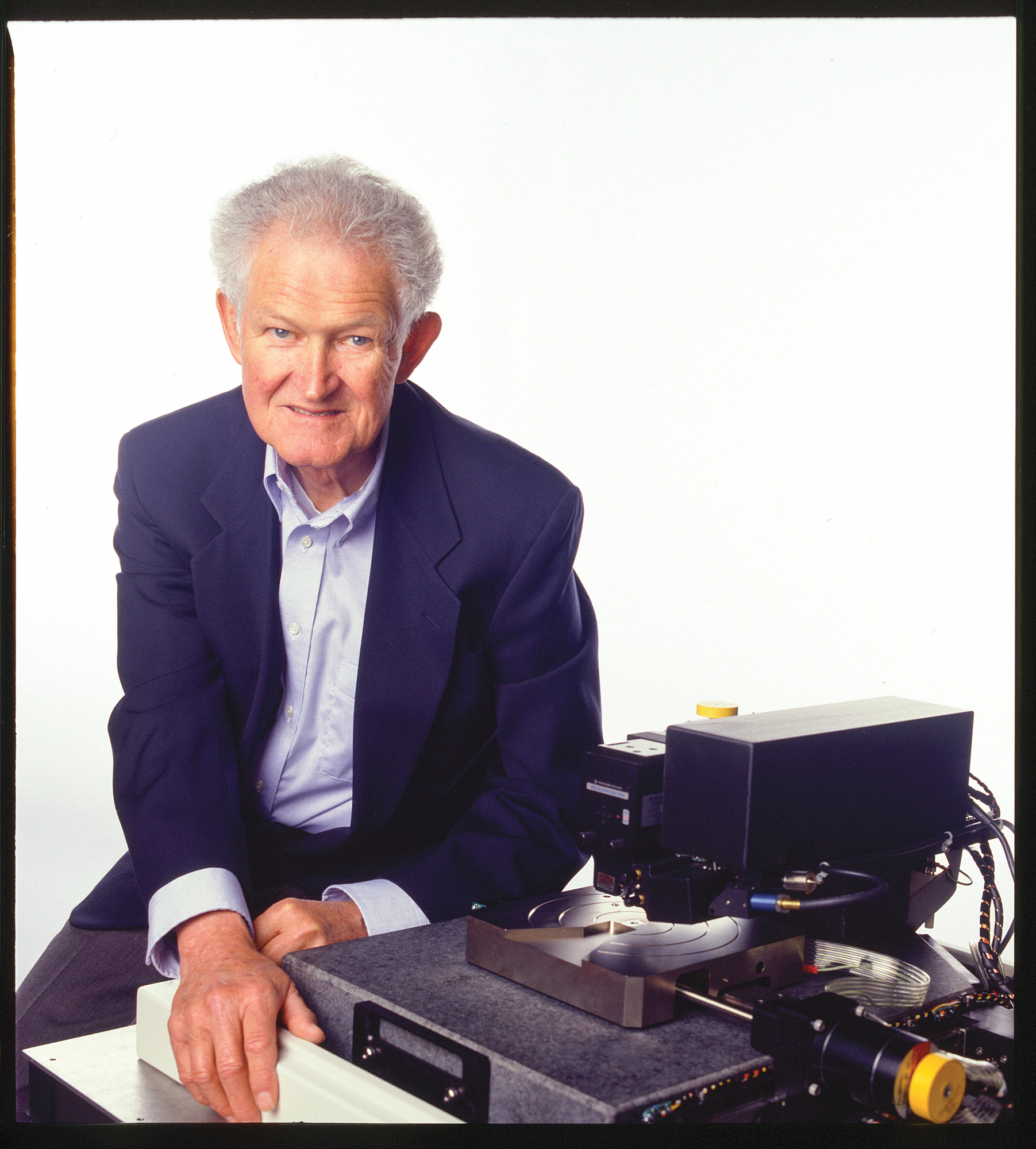

‘IT WORKS!’: Quate and his collaborators from IBM built the AFM in the mid-1980s. (Photo: Stanford Libraries; Linda A. Cicero/Stanford University)

“It works!” Quate shouted.

Quate, a professor of applied physics, had already co-invented the scanning acoustic microscope in 1973. It used high-frequency sound waves to measure living cells without damaging them. The atomic force microscope, which Quate co-invented in 1986 after persuading IBM’s Gerd Binnig and Christoph Gerber to come to the Bay Area from Switzerland for a year to collaborate, used a nanoscale probe to create 3D images 1,000 times more detailed than existing microscopes could provide, on almost any material. The microscope laid the foundation for nanotechnology, which allows for the study of matter on the tiniest of scales (one nanometer is one-billionth of a meter, or less than 1/50,000 the diameter of a human hair). Nanoscience has since been used to watch chemical reactions, arrange atoms, and study proteins, among other previously unimaginable accomplishments. It enables precise drug delivery to tumors, the pink line on home pregnancy tests, and a whole lot of things that are “smart” (clothing, packaging, phones).

The probe of the atomic force microscope has been compared to a record player’s needle, and, in fact, the first prototype was created from a diamond-tipped phonograph needle that Binnig and Gerber smashed. They then glued a tiny diamond shard on top of gold foil to create a cantilever. Quate later built even smaller cantilevers that allowed atomic forces to be measured.

Quate was “a perfect scientific manager,” Binnig said in a 2016 interview. “If one of the team members discovered something or got some nice results, all the others gathered around and wanted to know everything about it. They were all really happy for this one person. There was no jealousy. Zero, zero jealousy. Just a real team. I’ve never seen something like that again.”

The Architecture of the Internet

Who: Assistant professor of computer science and of electrical engineering Vinton Cerf, ’65, and DARPA’s Robert Kahn

When: 1974

Led to: Do we really need to explain?

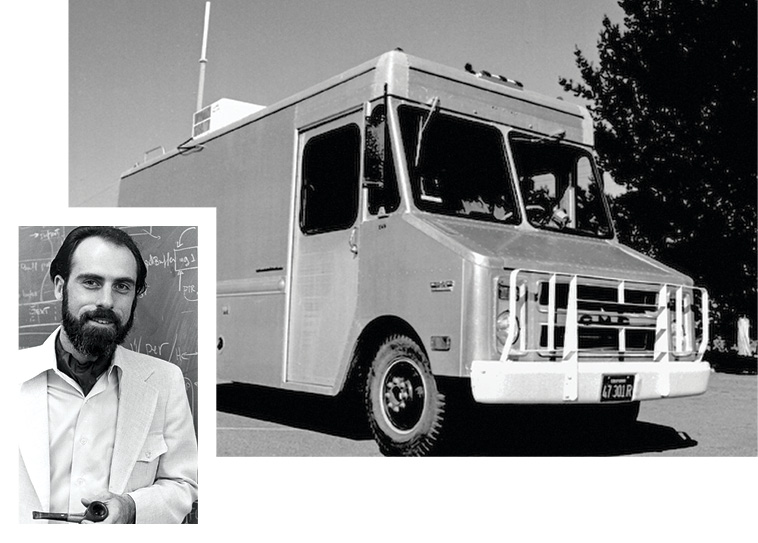

One day in 1977, Vinton Cerf and his team sent a message from a mobile radio van in the Bay Area to Cambridge, Mass., back and forth across the Atlantic Ocean, down the eastern coast of the United States, and then west to Los Angeles. The message traveled nearly 100,000 miles—counting two roundtrips to satellites in orbit—to make a trip of about 400 miles.

It was proof positive of the viability of a system—eventually known as TCP/IP, for Transmission Control Protocol and Internet Protocol—that became and continues to be the underlying infrastructure of the internet.

“That three-way network test was a very important milestone,” says Cerf, ’65.

One of the three building blocks was the Advanced Research Projects Agency Network (ARPANET), a U.S. military-funded system, which Cerf had helped create, that let computers on a shared network communicate with one another. The network test demonstrated that by using the new internet architecture model, communication could flow not just within ARPANET, but across three separate networks: ARPANET plus existing radio and satellite communications networks.

Cerf was working as an assistant professor of computer science and of electrical engineering at Stanford when he co-developed the model with Robert Kahn, who had recruited him to work on “internetting” at the Defense Advanced Research Projects Agency (DARPA) in 1973. They published a paper describing the theory behind their solution to “internetwork” in 1974 before beginning to test transmission in real life. Cerf analogizes the design, which relies on so-called packet switching, to sending postcards through the mail—separate, small, addressable bursts that each arrive at their destination. And even though the speeds of transmission have gotten much faster and the number of networks has grown dramatically, that analogy still holds true today. (In 1974, Cerf and Kahn guessed that 256 networks would be “sufficient for the foreseeable future”; today, there are tens of thousands of autonomous networks around the world.)
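Cerf's postcard analogy can be sketched in a few lines of Python. This is a toy illustration only, not TCP/IP: the function names, the eight-character packet size, the dictionary fields, and the destination label "host-b" are all assumptions made for the example, and real protocols add headers, checksums, and retransmission that are left out here.

```python
import random

def to_packets(message, destination, size=8):
    """Split a message into small, individually addressed packets ("postcards")."""
    return [
        {"to": destination, "seq": i, "data": message[start:start + size]}
        for i, start in enumerate(range(0, len(message), size))
    ]

def reassemble(packets):
    """Rebuild the message by putting packets back in sequence order."""
    ordered = sorted(packets, key=lambda p: p["seq"])
    return "".join(p["data"] for p in ordered)

packets = to_packets("postcards can arrive in any order", "host-b")
random.shuffle(packets)  # simulate packets taking different routes
restored = reassemble(packets)
```

Because each packet carries its own address and sequence number, the receiver can rebuild the message no matter which route, or which underlying network, each piece traveled.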

FOLLOWING PROTOCOL: The test transmission of a message among three networks, says Cerf (left), was “a very important milestone.” (Photos, from top: SRI International; Courtesy Special Collections & University Archives/Stanford Libraries)

“The whole idea was to try to put the internet protocol on any underlying transmission system that was invented, trying to future-proof the architecture of the internet,” Cerf explained in a 2007 oral history.

The implications of their simple but flexible protocol became clearer in the 1980s, when Cerf helped connect networks beyond the government and affiliated university researchers, ushering in an era of communication connecting every corner of the globe using evolving technologies that have ranged from AOL to Zoom and show no signs of stopping any time soon.

“It’s still going strong,” Kahn said last year. “I think we kind of nailed it.”

Cerf left Stanford for DARPA in 1976 and continues to work on internetting a half-century later. Since 1998, he has been a visiting scientist at NASA’s Jet Propulsion Laboratory, where he collaborates on an interplanetary network that can handle the delays and disruptions associated with space travel. He’s also chief internet evangelist at a company that wouldn’t exist—and wouldn’t have been necessary—without his pathbreaking work: Google.

Rebecca Beyer is a freelance writer in the Boston area. Email her at stanford.magazine@stanford.edu.