Assembly-line manufacturing informed the first generation of robots—big, stationary machines programmed to perform specific industrial tasks. Meanwhile, researchers pursued the development of robots that could perform delicate tasks and interact safely and deftly with humans. Today, research is producing a generation of lightweight, nimble, “soft-touch” robots that, if far from the ’60s-era vision of a Jetsons-style robot maid, also far surpass it. Here are five Stanford robots that have helped define the future of robotics.

Shakey

Stanford Research Institute Artificial Intelligence Center, 1972

The first autonomous mobile robot that could perceive and make decisions within its environment, Shakey captured the attention and imagination of a world eager for robots that would coexist with humans. Developed at the Stanford Research Institute, which separated from the university in 1970, it was named for the wobbly way it moved around on a wheeled base. Shakey used built-in sensors, a camera and a range finder to navigate around objects and through doorways. It also could combine simple actions to perform more complex tasks that required planning, such as pushing a block off a platform.

Photo: Linda A. Cicero/Stanford News Service

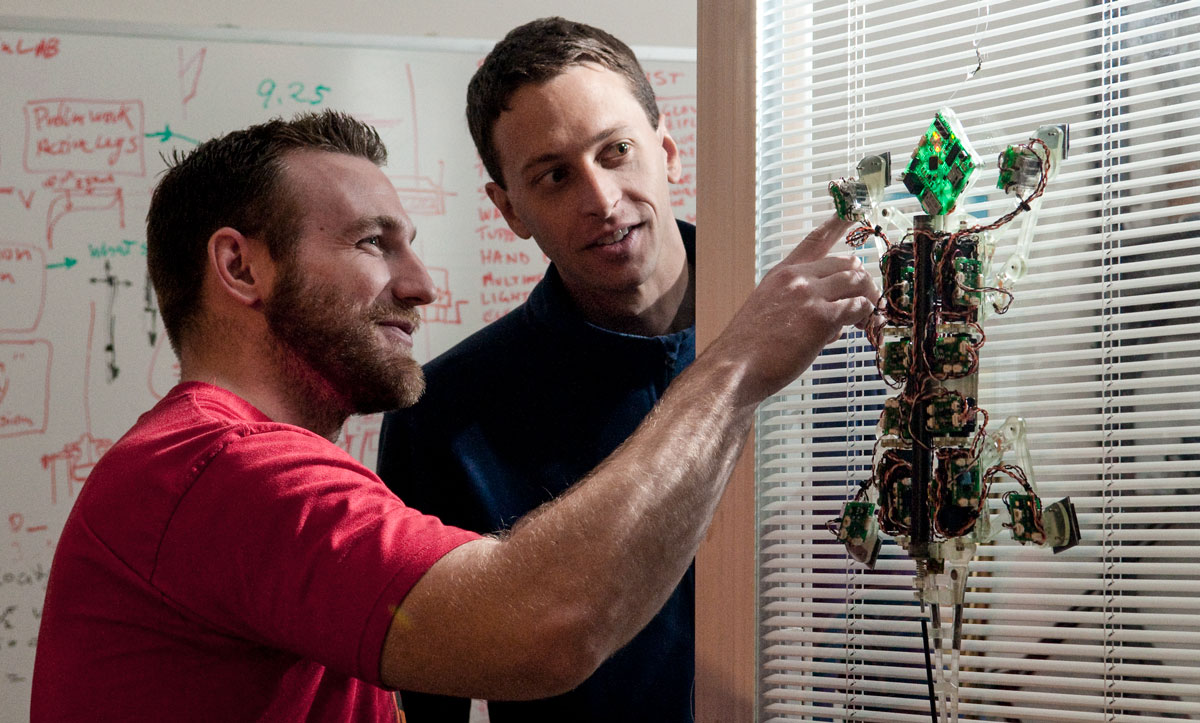

Stickybot

Stanford Biomimetics and Dexterous Manipulation Lab, 2006

The adhesive pads on the feet of this agile robot aren’t actually sticky. Molecular attraction is what makes them adhere to walls, a design inspired by the toes of the gecko. The adhesive technology has been harnessed to help drones lift heavy objects and allow humans to scale walls Spidey-style, and is slated for a gig cleaning up debris in space. As for Stickybot, which has 19 motors powering its intricate wall-crawling, it could be used for search and rescue, inspection and surveillance. After all, who wouldn’t want to be a gecko on the wall?

Photo: Osada/Seguin/DRASSM/Stanford University

OceanOne

Stanford Robotics Lab, 2016

Meet OceanOne, a humanoid robot designed to perform underwater tasks that would be dangerous or impossible for human divers. The robot, which has two fully articulated arms and a mermaidlike “tail,” is fitted with sensors that relay haptic feedback so that the pilot at its controls can feel what the robot is touching as it carefully picks up a vase from a centuries-old shipwreck or places underwater sensors in delicate coral reefs. “When the robot touches the object on the seafloor, you can feel exactly what the robot is touching,” says OceanOne’s creator, computer science professor Oussama Khatib, who directs the Stanford Robotics Lab. “And human cognition and reasoning are projected down into the bottom of the ocean.”

Photo: Amanda Law

JackRabbot2

Stanford Vision and Learning Lab, 2018

Cute by design, the socially savvy JackRabbot2 studies the etiquette of human interactions in an attempt to learn how to move among people. That means respecting personal space, yielding the right-of-way, and signaling “no, after you!” with a gesture of its robotic arm or a blink of its expressive digital eyes. Multiple sensors and a deep learning algorithm keep JackRabbot2 navigating skillfully around pedestrians—not to mention the Farm’s bikes, scooters and skateboards—as it collects data that will inform robots’ ability to maneuver safely and autonomously among people in busy environments. Among its latest tricks: posing for selfies and receiving hugs.

Photo: Linda A. Cicero/Stanford News Service

Doggo

Stanford Student Robotics Club, 2019

It bounces, trots and does backflips, but what may be most endearing about Doggo, a four-legged robot created by students, is that it’s open-source. Its free plans and software offer a versatile, lightweight mobile base that, with the addition of sensors, a camera and arms, could be used for applications as diverse as search and rescue or package delivery. Legged robots are popular with researchers, but “they’re very expensive to build, and the designs are never released,” says Patrick Slade, MS ’17, a mechanical engineering doctoral student who mentored the student team. “We designed a very capable system while keeping fabrication costs and the required tools to a minimum so other interested groups could replicate it.”

Charity Ferreira is a contributing editor at Stanford. Email her at stanford.magazine@stanford.edu.